How to Build a Google Meet AI Assistant App in 10 Minutes with Unbody & Appsmith

Goal

Learn how to develop an AI meeting assistant app that processes video recordings from Google Meet, writes notes, captures action items, and generates summaries with almost no code.

Prerequisites

You use Google Meet and meet the requirements to record a video meeting.

You have an Unbody account. Create a new account for free if you do not have one.

You have an Appsmith account. Sign up for a new account.

You have forked the GitHub repo.

Overview

Effective communication and efficient meeting management are key to a team’s success in the modern workplace. With that in mind, we will develop an AI-powered meeting assistant app that transforms Google Meet recordings into automatically generated meeting notes with key takeaways and action items. This blog post is for every creator, from developers to no-coders, who is interested in the intersection of AI and productivity tools. It’s particularly useful for those who have limited AI development experience and want to build AI applications with simple low-code tools like Unbody and Appsmith.

Introducing the AI-powered meeting assistant app

Imagine an app that connects to your Google Drive, where all your Google Meet video recordings are saved, automatically transcribes the meeting audio, and generates meeting notes with key points and action items as soon as a recording is synced. You can fully engage in the conversation during the meeting without having to take notes yourself. If you are running late or can’t make the meeting, the app will still take notes. The app can make virtual meetings more productive: team leaders, project managers, developers, and anyone who regularly uses Google Meet can benefit from it.

Of course, there are many existing solutions on the market, like Otter.ai or Fathom. But if you want to build a tool yourself and customize its output, then you are on the same page as me. To develop this application, we will use Unbody to turn video transcriptions into generative content and Appsmith to design and build the UI of our app without extensive front-end coding. Let’s look at the role of each in the app.

Unbody is the brain

Unbody is at the heart of our tool. It handles knowledge ingestion, such as transforming audio into transcripts and creating AI-generated summaries, and knowledge delivery via a GraphQL API. Using Unbody’s AI-enabled transformation and content analysis, our project extracts action items from the meeting audio and converts them into structured content, so that critical information is less likely to be missed.

Unbody can also aggregate and synchronize various types of files including text documents, PDFs, spreadsheets, images, and videos. For instance, a PDF file in Google Drive, an image shared in a Slack channel, or video files in a local folder can all be continuously synced to Unbody.

Learn more about Unbody in the article "All AI buzz in one endpoint, one line of code" by Amir Houieh.

Appsmith is our frontend

Appsmith is an open-source low-code platform designed to help developers build internal tools quickly and efficiently. It serves as the frontend of our app, providing a customizable and interactive dashboard for viewing meeting summaries and action items. Appsmith connects to the GraphQL API exposed by Unbody as a data source, then fetches the data and displays it in widgets.

Follow this one-click demo link to see the running app on Appsmith Cloud.

How it works

All you need to do is:

- Enable video recording during your Google Meet sessions, and recordings are uploaded automatically to the My Drive > Meet Recordings folder in your Google Drive.

- Connect Google Drive as a content source to Unbody. Unbody fetches the latest changes from your drive whenever a change is detected. Unbody’s AI-powered engine then processes and indexes the content. For example, we use Unbody to extract key points and decisions from the video transcription.

- Retrieve the result from Unbody’s content API using GraphQL. You write custom GraphQL queries to get a summary of the meeting and identify specific action items. The GraphQL endpoint acts as a data interface between your video recordings in Google Drive and the Appsmith dashboard.

- Access the Appsmith dashboard to view the meeting summary and action items. The dashboard provides a real-time overview of all ongoing tasks and deadlines. The picture below illustrates the dashboard with example data:

Meeting AI Assistant generated report on Appsmith dashboard

See the following GIF to understand the whole process:

Activate a video recording in Google Meet

Once you are in the meeting, start a video recording and transcription in your Google Meet session.

Once the recording is stopped or the meeting ends, it is automatically saved to your Google Drive in a folder labeled “Meet Recordings”.

Video recording is automatically saved to Google Drive

Setting up the Unbody project

Access your Unbody Dashboard and start by creating a new project. First, you might want to configure the AI engines and features.

Unbody Features setting

Unbody uses an advanced AI technology known as large language models (LLMs) to interpret text input. These models come in various types and configurations, and Unbody provides a broad selection. We are going to use two features: text-vectorizer and generative search.

Text vectorizer transforms the transcription of your Google Meet videos into a format understandable to the AI. For the model choice to vectorize transcriptions, I recommend using the open-source and free Contextionary option.

More technical insight about Text Vectorizer

It’s an algorithm that creates a vector representation of the transcriptions. A vector representation is just a list of floating-point numbers like 5.5, 0.25, and -1.2. The distance between two vectors measures their relatedness: small distances suggest high relatedness and large distances suggest low relatedness. Unbody also indexes vector representations for easy search. Think of it as organizing books in a library so they’re easy to find. Learn more about vector indexing here.
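To make the idea of distance concrete, here is a minimal Python sketch. It assumes cosine distance as the measure (the article does not say which metric Unbody uses internally), and the three-dimensional vectors and their names are invented for illustration; real embeddings have hundreds of dimensions.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity: 0 means same direction, larger means less related."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


# Toy vectors standing in for three transcript snippets (made-up values).
budget_review = [5.5, 0.25, -1.2]
cost_planning = [5.0, 0.4, -1.0]
team_lunch = [-0.8, 4.1, 2.3]

near = cosine_distance(budget_review, cost_planning)  # related topics
far = cosine_distance(budget_review, team_lunch)      # unrelated topics
print(near < far)
```

A vector index avoids computing this distance against every stored vector, which is what keeps search fast as the number of transcripts grows.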

After Unbody has indexed the data, it offers generative search engines (currently only ones from OpenAI (ChatGPT)) to enable generative actions on top of your text materials. GPT is very good at understanding and using language in a way that’s similar to humans. The engine helps us summarize what was discussed in the meeting and identify any tasks or ‘action items’ that need to be done. It’s like having an assistant who listens to your meetings and then tells you the key points and what needs to be done next. Unbody is expected to support other generative engines in the future, offering you more options to choose from.

Connect Google Drive and, optionally, Google Calendar if you also need event details included in the app.

Creating a new Unbody project with data sources

After you successfully connect to data sources, you should see Google Drive and Google Calendar in the sources list:

Google Drive and Calendar are chosen data sources for Unbody

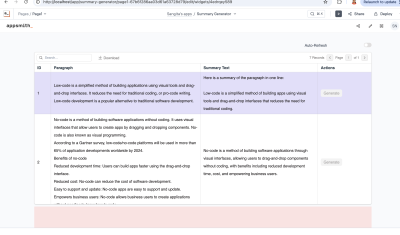

Write GraphQL queries

Unbody has a user-friendly GraphQL playground. Open the GraphQL tab and try the existing queries in the repo, which extract action items or fetch event booking details from Google Calendar, or craft your own queries.

Writing custom queries on Unbody GraphQL playground
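Outside the playground, a GraphQL query is normally sent as a JSON body in an HTTP POST request. The Python sketch below only builds such a request and does not send it. The endpoint URL is an assumption to verify in your Unbody Dashboard, and the collection and field names in the query are placeholders, not Unbody's confirmed schema; copy a working query from the playground or the repo instead.

```python
import json
import urllib.request

# Assumed endpoint; confirm the real URL in your Unbody Dashboard.
UNBODY_GRAPHQL_URL = "https://graphql.unbody.io"

# Hypothetical query: collection and field names are placeholders.
EXAMPLE_QUERY = """
{
  Get {
    VideoFile(limit: 5) {
      originalName
    }
  }
}
"""


def build_graphql_request(url, query, headers):
    """Build (but do not send) a POST request carrying a GraphQL query as JSON."""
    body = json.dumps({"query": query}).encode("utf-8")
    all_headers = {"Content-Type": "application/json", **headers}
    return urllib.request.Request(url, data=body, headers=all_headers, method="POST")


request = build_graphql_request(UNBODY_GRAPHQL_URL, EXAMPLE_QUERY, headers={})
# To send it: urllib.request.urlopen(request), then json.load() the response.
```

The Appsmith data source does the same thing for you: each registered query becomes the `query` field of a POST body.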

Setting up the frontend with Appsmith

Next, bring in the existing Appsmith application from the GitHub repository by importing it into a new workspace in your Appsmith account. Follow the steps given in the Import From Repository guide on the Appsmith website.

Besides using the cloud version, you can also install Appsmith with Docker on your local machine.

Once the import is complete, you’ll see a canvas similar to this:

Example of Appsmith canvas to build UI apps

You can use the drag-and-drop interface to customize the dashboard. Modify or add widgets like tables, text boxes, and buttons as needed. Note that Appsmith doesn’t export any secret configuration or header values used for connecting a data source, such as the Unbody API_KEY and PROJECT_ID. You need to find your personal API key and project ID in the Unbody Dashboard and configure them manually in the data source headers, similar to this:

Connect Unbody as a data source to Appsmith
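If you call the API from your own script rather than from Appsmith, the same two values have to travel as request headers. This is a minimal sketch that reads them from environment variables so they never end up in source control. The variable names `UNBODY_API_KEY` and `UNBODY_PROJECT_ID` and the header names `Authorization` and `X-Project-Id` are assumptions; mirror whatever header names the imported Appsmith data source shows.

```python
import os


def unbody_headers(env=os.environ):
    """Read the API key and project ID from the environment and return request headers."""
    api_key = env.get("UNBODY_API_KEY", "").strip()
    project_id = env.get("UNBODY_PROJECT_ID", "").strip()
    missing = [
        name
        for name, value in (("UNBODY_API_KEY", api_key), ("UNBODY_PROJECT_ID", project_id))
        if not value
    ]
    if missing:
        # Fail early with a clear message instead of sending an unauthenticated request.
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    # Header names are assumptions; check them against your data source configuration.
    return {"Authorization": api_key, "X-Project-Id": project_id}
```

Passing a plain dictionary as `env` makes the function easy to try without touching your real environment, for example `unbody_headers({"UNBODY_API_KEY": "demo", "UNBODY_PROJECT_ID": "demo"})`.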

As you can see, the project sets up a data source in Appsmith to connect to your Unbody GraphQL server. Use this to fetch meeting summaries and display them in the dashboard. Other API queries, UI pages, and widgets are automatically created after the import.

Register Unbody GraphQL queries for Appsmith

You can run the app by clicking the Preview button at the top right of the screen. You will then see the dashboard with all the data.

Conclusion

You now have a fully functional application that can transform Google Meet video recordings into actionable summaries and tasks. This AI-powered meeting report app is a good example of turning any content into an intelligible and queryable knowledge base. You used a RAG (Retrieval-Augmented Generation) approach to deliver an intuitive and powerful content interaction platform through a single GraphQL endpoint. Appsmith’s low-code drag-and-drop interface also significantly reduced the time and effort typically required for such a full-stack task. For more advanced functionality, both Unbody and Appsmith allow the use of JavaScript and TypeScript, giving developers the flexibility to write custom logic.

Next steps

This setup guide provides a basic framework, which you can expand and customize according to your specific requirements. In the application, you may have noticed an unfinished page called *Ask Meeting Notes.* Apply what you learned in this article and implement a new GraphQL query using the Generative Q&A feature that sends its result to the output text widget, so that users can search for specific information from the meetings in the search bar.
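One detail worth getting right when you wire a search bar to a query is quoting the user's text, since a stray double quote would otherwise break the query. The sketch below is one possible approach: it relies on JSON string escaping, which produces a valid GraphQL string literal for typical input. The query shape (`TextBlock`, `ask`, `_additional`) is a hypothetical placeholder and not Unbody's confirmed Generative Q&A schema; replace it with a query that works in the playground.

```python
import json

# Hypothetical query template; the collection and field names are placeholders.
ASK_TEMPLATE = """
{
  Get {
    TextBlock(ask: {question: __QUESTION__}) {
      _additional { answer { result } }
    }
  }
}
"""


def graphql_string(text):
    """Quote text as a GraphQL string literal using JSON escaping rules."""
    return json.dumps(text, ensure_ascii=False)


def build_ask_query(question):
    """Insert the escaped question into the placeholder query template."""
    question = question.strip()
    if not question:
        raise ValueError("question must not be empty")
    return ASK_TEMPLATE.replace("__QUESTION__", graphql_string(question))


query = build_ask_query('Who owns the "Q3 budget" action item?')
```

In Appsmith itself the equivalent step would happen wherever the search bar's text is bound into the query body.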

About the author

Visit my blog: www.iambobur.com